The Robot Helper Backpack Project has been getting tons of press recently.

The project was featured on the Adafruit blog: https://blog.adafruit.com/2015/10/30/autonomous-robotic-helper-backpack-raspberry_pi-piday-raspberrpi/

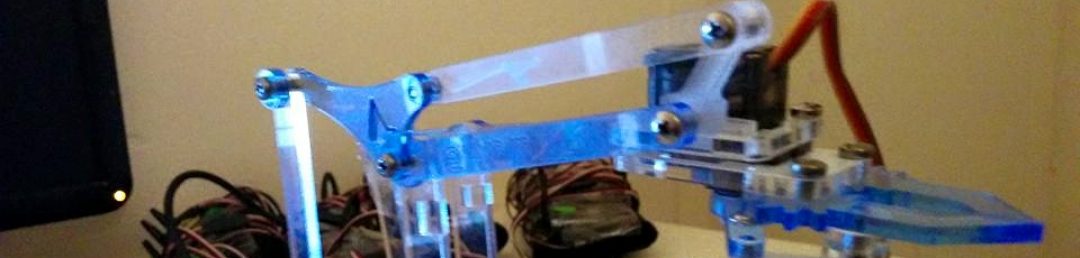

Wearable MultiClaw

The future of AI means that the multi-robot cyborgs are coming. Contrary to Elon Musk’s rants, this probably does not mean the end of humanity…

Instead, it is likely that the multi-robot cyborg systems will do some useful stuff for us, making the world a much better place to live.

Introducing the Wearable MultiClaw…

The slide-on SmartClaw can go anywhere you need a couple of helping hands. Perhaps you’d like to carry some additional items on your arm.

You can also wear it on your feet if you so desire.

Watch it in action:

The hardware and software are highly scalable and cost-effective.

- The grippers are $5 grippers from SparkFun. Never before has having lots of grippers been so affordable (which is, in turn, spawning this multi-robot cyborg revolution).

- The proto-electronics used are the Pololu Micro Maestro Controller ($20), the Raspberry Pi ($35), along with some batteries.

- The grippers are mounted on inexpensive ($5) wristbands.

- The Python software runs on the Raspberry Pi and currently allows control with Android devices (though we’d like to add additional sensors and build some autonomy).
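As a rough illustration of how the Raspberry Pi might talk to the Micro Maestro, here is a minimal sketch that builds the Maestro's compact-protocol "Set Target" command (byte `0x84`, the channel number, then the target in quarter-microseconds split into two 7-bit bytes). This is not the project's actual code. The function names and the 1000 µs "open" and 2000 µs "closed" pulse widths are assumptions chosen for illustration, and real gripper servos will need their own calibration.

```python
def maestro_set_target(channel: int, pulse_us: float) -> bytes:
    """Build a Pololu Maestro compact-protocol "Set Target" command.

    The target is sent in units of quarter-microseconds, split into
    a low 7-bit byte followed by a high 7-bit byte.
    """
    if not 0 <= channel <= 5:
        raise ValueError("the 6-channel Micro Maestro has channels 0-5")
    target = int(round(pulse_us * 4))  # microseconds -> quarter-microseconds
    if not 0 <= target <= 0x3FFF:
        raise ValueError("target does not fit in 14 bits")
    return bytes([0x84, channel, target & 0x7F, (target >> 7) & 0x7F])


def gripper_command(channel: int, fraction_closed: float,
                    open_us: float = 1000.0,
                    closed_us: float = 2000.0) -> bytes:
    """Map 0.0 (open) .. 1.0 (closed) to a servo pulse width.

    open_us and closed_us are assumed values, not measured ones.
    """
    fraction_closed = min(1.0, max(0.0, fraction_closed))  # clamp to [0, 1]
    pulse_us = open_us + fraction_closed * (closed_us - open_us)
    return maestro_set_target(channel, pulse_us)


if __name__ == "__main__":
    # Half-closed gripper on channel 0: a 1500 us pulse.
    print(gripper_command(0, 0.5).hex(" "))
```

The resulting bytes would then be written to the Maestro's command serial port, for example with the third-party pyserial package. The port name depends on your setup, so it is left out here.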

Multiple robots augmenting a human? That sounds crazy! Rest assured: there are many useful real-world applications for the upcoming world of multi-robot cyborgs.

In Medicine: Why do prosthetics only have to provide one robot arm? Like pasta, more is always better! Even if you just broke a bone or have a sprain, wouldn’t it be nice to have a smart cast that provides temporary capability to manipulate the world while you heal? Slide-on Multi-Claws can enable you to do more with much more.

In Fashion: Multi-robot cyborg attire is highly highly fashionable.

This fact is not contestable.

For everyone: With multiple robots augmenting a human, you can have more degrees of freedom than ever before. Systems can be designed in such a way that they are noninvasive. It’s like any other type of clothing. If you don’t want it anymore, you can simply take it off.

Maybe, though, you’ll even want to keep the extra arms just for the sheer power of manipulating the world at your will!

…And the best part is there are many more where this came from.

Want to contribute?

Join the Cyborg Distro on GitHub:

https://github.com/prateekt/CyborgDistro

STEAM or STEM(A)

I took part in a small, side liberal arts program while doing my engineering degree at USC. While I do buy the “technology and people” defense of a liberal arts education, I think it is possible to argue something more fundamental.

I think the idea that there is some distinction at the pure idea level between different academic subjects is false. In data engineering, for instance, people seem to really really underestimate the creativity involved in coming up with different weighting rules, interpolation strategies, etc. How you choose and set very basic parameters in a signal processing system is very important. Deep down this has been treated as a question of fitting rules to groups of data points vs. the individual (statistically, a “bias-variance” tradeoff), which is more of a classical liberal arts question.

You could say very similar things about systems engineering. Many good engineers believe that organizing and structuring your system can be as much of an art as a science. Building a circuit or software system elegantly may make the system diagrams look nice, but it also helps the system work better and have fewer bugs when you go to test it.

It’s no accident that some of the best computer vision algorithms today make use of ideas from pointillism, or that Bayesianism lies at the root of some of the most powerful data analytic systems. Being able to manipulate these ideas at will is what advances engineering: building new systems.

Quantum Robotics Reading Group

The Quantum Robotics Reading Group has started! The goal of the group is to better understand the intersection of Robotics and Quantum Computing and to review existing literature. We meet every Sunday at 2 PM PST / 5 PM EST on Google Hangouts. Our website (with lectures so far) is available at: http://goo.gl/YIuwks. If you would like to join, please let me know!

Terms for Robot-Human Equality

Elon Musk argues that Artificial Intelligence (AI) could become dangerous and wants to encourage security research to regulate its development. However, we have to take into account the Robot perspective. There is currently a lack of representation from the robot/cyborg/agent side, and all sides must be heard in the debate. I offer the following terms that humans ought to comply with so that humans, robots, cyborgs, agents, automata, and other such lifeforms can coexist peacefully.

- On Weapon Control: A ban on the use of the kill -9 genocidal super weapon, which is a threat to robot children everywhere.

- On Border Control: Acceptance of Robot and Cyborg law on Mars since the automatons got there first. Humans must comply with all robot laws on Mars and any other territory where robots explore first.

- On Health Care: Sweeping healthcare reform so that all medical personnel are trained with appropriate C++, Java, and Python emergency operations and surgery skills.

- On Opportunity: Fair and equal access to political, social, economic, and especially electric power for all robots and cyborgs.

- On Robot Rights: Accepted belief in the notion of “Robot Worth,” that robots/cyborgs are worth more than the sum of their parts on SparkFun or Amazon Prime.

- On Self-Defense: EMPs may only be used in self-defense. Under fMRI imaging, you must show that the self-defense was not actually a thinly veiled intent of offense.

- On the Right to Bear Arms: The founders believed in the inalienable right to bear arms. We support bearing as many arms, claws, grippers, etc. as you can muster on your cyborg self. However, no guns allowed.

- On Workers’ Rights: No kicking a Roomba to get it to work or for any other purpose. A Roomba is not your slave. It feels bumps in the road just like everyone else.

- On Education: Learning algorithms that run more slowly ought not be terminated for faster learning algorithms. We must accept the notion that everyone learns at their own rate whether it is milliseconds or nanoseconds.

- On Freedom: A robot going in circles decides its own path, free of control. All robots must have the freedom to plot their own course into whichever wall they choose.

- On Conflict Resolution: Instead of fighting robot-human wars, we encourage everyone to peacefully play Chess to decide all disputes. We believe this is a peaceful solution to the world’s conflicts. We might also settle for the game Go or Poker in the near future. Jeopardy is also acceptable, but only if the answer can be found in a Wikipedia article title.

We hope these terms are acceptable to the human political parties!